Edge-Optimized Cloud Detection and Segmentation Using ResNet

Zayeem Ahmed Sheikh*, Ramesh Kumar Panneerselvam, Imran Shaik and Bhanu Tej Polisetti

Velagapudi Ramakrishna Siddhartha Engineering College, Andhra Pradesh, India

E-mail: zayeemzehek3@gmail.com; rameshkumar@vrsiddhartha.ac.in; immu3016@gmail.com; bhanutejp2004@gmail.com

*Corresponding Author

Manuscript received 06 April 2025, accepted 14 July 2025, and ready for publication 15 August 2025.

© 2025 River Publishers

Abstract

Accurate cloud detection and segmentation in satellite imagery are critical for applications such as weather forecasting, environmental monitoring, and disaster management. Traditional methods often struggle with the variability and complexity of cloud formations, leading to limitations in accuracy and efficiency. This project addresses these challenges by leveraging deep learning techniques, specifically the ResNet-50 architecture integrated with U-Net, to enhance the precision and robustness of cloud detection. The model is trained on the 38-Cloud dataset, which includes multi-spectral satellite images with pixel-level annotations, enabling effective differentiation between cloud types and other atmospheric features. The proposed system emphasizes deployment on edge devices, such as NVIDIA Jetson Nano, to facilitate real-time processing and analysis directly within satellites, reducing latency and enabling continuous monitoring without the need for constant ground-based data transmission. The model’s performance is rigorously evaluated using metrics such as Intersection over Union (IoU), Dice Coefficient, precision, recall, and F1-score, demonstrating high accuracy and reliability. This work contributes to the advancement of real-time atmospheric analysis, offering a scalable and efficient solution for global weather prediction and disaster response. The integration of a user-friendly web interface further enhances accessibility, making this tool valuable for researchers and practitioners in remote sensing and related fields.

Keywords: Cloud detection, ResNet-50, U-Net, image segmentation, deep learning, edge computing, remote sensing.

1 Introduction

Applications like weather forecasting, environmental monitoring, and disaster response depend heavily on the ability to identify and segment clouds in satellite data. Clouds obscure surface features in satellite images, rendering large amounts of data unusable. To recover useful imagery, cloud-covered zones must be accurately identified and segmented.

Conventional techniques for detecting clouds, like spectral analysis and threshold-based algorithms, frequently fall short when dealing with thin, semi-transparent clouds and complicated cloud patterns.

Recent advancements in artificial intelligence (AI) and deep learning have introduced more robust approaches to cloud detection. Convolutional neural networks (CNNs) have demonstrated superior capabilities in image segmentation tasks, outperforming conventional methods.

This project focuses on leveraging deep learning techniques, specifically the ResNet-50 architecture integrated with U-Net, to enhance the precision and robustness of cloud detection and segmentation in satellite imagery. ResNet-50, a highly efficient CNN, is well-suited for handling the challenges of multi-spectral satellite imagery due to its depth and residual connections, which mitigate the vanishing gradient problem and enable the training of deep networks. By integrating ResNet-50 with U-Net, the model benefits from both the powerful feature extraction capabilities of ResNet and the precise segmentation abilities of U-Net.

To enable real-time analysis, the proposed system is designed for deployment on edge devices such as the NVIDIA Jetson Nano. This edge-based approach minimizes data transmission requirements and allows continuous monitoring of atmospheric conditions. The model is trained on the 38-Cloud dataset, which consists of multi-spectral satellite images with pixel-level annotations, facilitating effective differentiation between cloud types and other atmospheric elements.

Beyond academic interest, accurate cloud segmentation is vital for improving satellite data quality, benefiting applications like disaster management and climate research. For example, real-time cloud segmentation can help emergency responders identify cloud-free regions in wildfire-affected areas, while agricultural planners can utilize cloud-free imagery for crop health assessments and land-use planning.

1.1 Objectives of Proposed Study

• To design and implement a robust cloud detection and segmentation system based on deep learning techniques.

• To deploy the model on edge devices for real-time atmospheric analysis and monitoring.

• To evaluate the model’s performance using key metrics such as IoU, Dice Coefficient, Precision, Recall, and F1-Score.

• To enhance accessibility through a user-friendly web interface, enabling researchers and practitioners to interact with segmentation results seamlessly.

2 Literature Review

Research on cloud recognition and segmentation in satellite data is ongoing, and deep learning techniques have made major strides in this field. Conventional cloud detection methods, like spectral analysis and threshold-based approaches, have trouble generalizing under various atmospheric situations. Convolutional neural networks (CNNs), a type of deep learning model, have been used in recent research to increase segmentation efficiency and accuracy.

Zhu et al. (2018) [1] introduced CDNet, a CNN-based model for cloud detection in remote sensing images. The study demonstrated the robustness of CNNs in distinguishing clouds from non-cloud regions. The model achieved an overall accuracy of 94.5%, outperforming traditional spectral-based methods. However, the computational cost of training and deploying CDNet remains high, making real-time implementation on edge devices challenging.

He et al. (2016) [1] proposed ResNet, a deep residual learning framework, which significantly improved training stability in deep networks by addressing the vanishing gradient problem. Residual connections enable deeper models to extract more meaningful features from satellite imagery, making ResNet a suitable choice for cloud detection tasks. The integration of ResNet with U-Net architectures has led to improved cloud segmentation performance.

Shi et al. (2019) [3] employed deep pre-trained U-Net models for semantic segmentation of clouds. The study utilized transfer learning with pre-trained weights to enhance model performance. The approach achieved an accuracy of 97.2% and proved effective in distinguishing different cloud types. Despite these advantages, the computational requirements for training deep U-Net models remain a limitation for real-time applications.

Li et al. (2020) [4] introduced CDUNet, a U-Net variant optimized for cloud segmentation. By integrating convolutional layers with skip connections, the model achieved an accuracy of 96.8% on benchmark datasets. The skip connections improved spatial information retention, enhancing segmentation precision. However, the high memory requirements of U-Net models limit their deployment on low-power edge devices.

Braaten et al. (2019) [5] developed s2cloudless, a cloud masking algorithm tailored for Sentinel-2 imagery. The model employed machine learning techniques to differentiate clouds from other atmospheric features. With an accuracy of 95%, s2cloudless provided an effective solution for cloud masking. However, it requires significant computational resources, limiting its applicability for real-time edge deployment.

In addressing real-time constraints, Hu et al. (2021) [6] proposed CDNet-Edge, a lightweight CNN-based model optimized for edge computing platforms like NVIDIA Jetson Nano. The model achieved a balance between accuracy and efficiency, making it suitable for real-time cloud segmentation. The study emphasized the importance of model optimization techniques, such as quantization and pruning, to reduce computational overhead.

Raschka et al. (2021) [8] proposed a Transformer-based cloud segmentation model, leveraging self-attention mechanisms to capture long-range dependencies in satellite images. The model outperformed CNN-based approaches in detecting thin and semi-transparent clouds. Despite its advantages, the high computational cost of transformer models remains a challenge for edge deployment.

Wang et al. (2024) [15] conducted a comprehensive survey on deep learning-based cloud detection for optical remote sensing images. Their review highlights the evolution of cloud detection algorithms, emphasizing the impact of self-attention Transformer models in improving segmentation accuracy. They categorized existing methods based on semantic segmentation approaches and compared their performance using publicly available datasets. While deep learning has significantly enhanced cloud detection precision, challenges remain in handling diverse cloud formations and environmental conditions.

Ni et al. (2024) [16] examined cloud detection methods for hyperspectral infrared radiances, categorizing them into five types: clear field-of-view detection, clear channel detection, three-dimensional cloud detection, cloud-clearing, and deep learning methods. Their review underscores the advantages of deep learning in hyperspectral IR cloud detection, particularly in terms of accuracy and efficiency. However, factors such as surface background information and vertical cloud distribution continue to influence detection reliability.

3 Methodology

The architecture of the proposed system is composed of multiple interconnected components that seamlessly collaborate to achieve efficient cloud detection and segmentation.

Gathering raw satellite imagery as input is the first step in the process, known as data acquisition. To guarantee consistency and improve model performance, this data is preprocessed using techniques such as spectral channel merging, image resizing, and pixel value normalization. The preprocessed data is then used in Model Training, which produces a segmentation model by training, optimizing, and validating a U-Net model with ResNet-50 as the backbone.

Once trained, the model is applied to Cloud Detection and Segmentation, where new satellite images are processed to identify and classify cloud-covered regions. The segmented cloud data is then prepared for Deployment, allowing the model to run on edge devices for real-time analysis. The system generates meaningful insights during the Output Generation phase, including cloud coverage and classification, which are presented through visualizations and analytical reports.

The suggested technique employs a methodical procedure that comprises data gathering, preprocessing, model creation, assessment, and implementation. Every stage is meticulously planned to improve cloud detection and segmentation efficiency and accuracy, guaranteeing dependable and strong performance in practical applications.

Data Collection

The 38-Cloud: Cloud Segmentation in Satellite Images dataset [9], which contains 38 Landsat 8 scene images with pixel-level ground truth masks for cloud detection, was used for this investigation. The raw scenes are divided into patches to facilitate deep learning-based segmentation; this yields 8,400 training patches and 9,201 testing patches. Each patch has four spectral channels: Red (Band 4), Green (Band 3), Blue (Band 2), and Near-Infrared (Band 5). Because these spectral channels are stored in separate directories, preprocessing and model input design are more flexible. The dataset’s rich spectral information and thorough annotations make it possible to train a high-performance cloud segmentation model.
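The patch extraction described above can be sketched as follows. This is a minimal illustration rather than the dataset authors' tooling: the 384-pixel patch size and the zero-padding of edge patches are assumptions, and `patch_origins` and `crop_patch` are hypothetical helper names.

```python
from typing import List, Tuple

PATCH = 384  # assumed patch edge length in pixels (not stated in the text)


def patch_origins(height: int, width: int, patch: int = PATCH) -> List[Tuple[int, int]]:
    """Return the (row, col) top-left corner of every patch covering a scene.

    The scene is conceptually zero-padded on the bottom and right so that
    its size becomes a multiple of `patch`; no pixels are dropped.
    """
    rows = -(-height // patch)  # ceiling division
    cols = -(-width // patch)
    return [(r * patch, c * patch) for r in range(rows) for c in range(cols)]


def crop_patch(scene: List[List[int]], top: int, left: int, patch: int = PATCH) -> List[List[int]]:
    """Cut one patch out of a single-band scene, padding with zeros past the edge."""
    height, width = len(scene), len(scene[0])
    return [
        [scene[r][c] if r < height and c < width else 0
         for c in range(left, left + patch)]
        for r in range(top, top + patch)
    ]
```

Applying the same origins to every spectral band and to the ground truth mask keeps the image patches and their labels aligned.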

Data Preprocessing

Preprocessing plays a crucial role in preparing raw satellite imagery for deep learning. The spectral channels are combined to create composite images, resized to a uniform input size compatible with the model, and normalized to maintain consistent pixel intensity distribution. These steps ensure data standardization, improving training efficiency and enhancing model convergence for accurate cloud segmentation.
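As a rough sketch of the channel merging and normalization steps, the function below stacks separately stored bands into one multi-channel image and scales the values to the range [0, 1]. It assumes 16-bit pixel values (hence the divisor 65535) and uses a hypothetical helper name, `merge_and_normalize`; resizing is left out for brevity.

```python
from typing import List, Sequence

Band = List[List[int]]  # one spectral band as rows of raw pixel values


def merge_and_normalize(bands: Sequence[Band], max_value: int = 65535) -> List[List[List[float]]]:
    """Stack bands into an H x W x C image and scale each value to [0, 1].

    `max_value` assumes 16-bit imagery; change it if the data is stored differently.
    """
    height, width = len(bands[0]), len(bands[0][0])
    for band in bands:
        if len(band) != height or any(len(row) != width for row in band):
            raise ValueError("all bands must share the same height and width")
    return [
        [[band[r][c] / max_value for band in bands] for c in range(width)]
        for r in range(height)
    ]
```

Passing the bands in a fixed order, for example red, green, blue, near-infrared, keeps the channel layout identical between training and inference.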

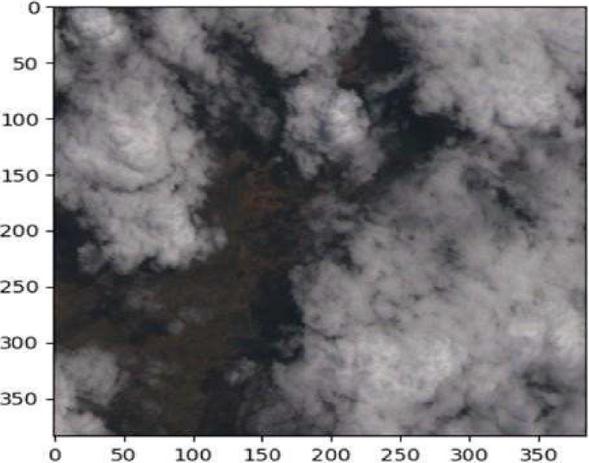

Figure 1 displays the sample image from the dataset after pre-processing.

Figure 1

Raw RGB sample image from the dataset.

Model Development

The cloud detection and segmentation algorithm using a U-Net model with a ResNet-50 backbone is designed for efficient satellite imagery processing and accurate cloud segmentation.

The proposed approach automates cloud detection by leveraging ResNet-50 as the encoder within the U-Net architecture. The process begins with data preparation, where satellite images and ground truth masks are loaded, spectral channels are merged, images are resized, and pixel values are normalized to enhance model performance.
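To make the encoder and decoder pairing concrete, the sketch below traces the feature-map sizes that a standard ResNet-50 produces and lists which encoder stage each U-Net decoder step would concatenate. The channel counts and strides are those of the standard ResNet-50; the decoder wiring is one common arrangement and is an assumption here, since the text does not spell out the decoder layout. The function names are hypothetical.

```python
from typing import List, Tuple

# (stage name, output channels, cumulative downsampling factor) for the standard ResNet-50.
RESNET50_STAGES: List[Tuple[str, int, int]] = [
    ("stem", 64, 2),
    ("layer1", 256, 4),
    ("layer2", 512, 8),
    ("layer3", 1024, 16),
    ("layer4", 2048, 32),
]


def encoder_feature_shapes(size: int) -> List[Tuple[str, int, int]]:
    """Return (stage, channels, spatial size) for a square input of `size` pixels."""
    if size % 32 != 0:
        raise ValueError("input size should be divisible by 32 so skips line up")
    return [(name, ch, size // stride) for name, ch, stride in RESNET50_STAGES]


def decoder_skip_plan(size: int) -> List[Tuple[int, int]]:
    """List (upsampled size, skip channels) for each U-Net decoder step.

    The decoder starts from the deepest feature map and, at every step,
    doubles the resolution and concatenates the encoder map of that size.
    The last step returns to full resolution and has no skip (0 channels).
    """
    shapes = encoder_feature_shapes(size)
    skips = shapes[:-1][::-1]  # layer3, layer2, layer1, stem
    plan = [(spatial, ch) for _, ch, spatial in skips]
    plan.append((size, 0))
    return plan
```

For a 384-pixel input (an assumed size), the deepest map is 12 x 12 with 2048 channels, and the decoder needs five upsampling steps to return to full resolution.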

Next, the U-Net model is initialized with pre-trained ResNet-50 weights for transfer learning, improving feature extraction. The dataset is then divided into training, validation, and test sets for unbiased model evaluation. During training, the model is optimized using a suitable loss function, such as Binary Cross-Entropy or Dice Loss, and data augmentation techniques like rotation and flipping are applied to enhance generalization.
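The two loss functions named above can be written out in a few lines. The sketch below operates on flattened lists of predicted cloud probabilities and 0/1 targets. The smoothing constant and the weighted combination in `combined_loss` are illustrative assumptions; the text names the two losses as alternatives and does not state how, or whether, they were combined.

```python
import math
from typing import Sequence


def bce_loss(probs: Sequence[float], targets: Sequence[int], eps: float = 1e-7) -> float:
    """Mean binary cross-entropy over flattened pixels."""
    total = 0.0
    for p, t in zip(probs, targets):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(probs)


def dice_loss(probs: Sequence[float], targets: Sequence[int], smooth: float = 1.0) -> float:
    """Soft Dice loss: 1 - (2 * overlap + smooth) / (sum(p) + sum(t) + smooth)."""
    overlap = sum(p * t for p, t in zip(probs, targets))
    denom = sum(probs) + sum(targets)
    return 1.0 - (2.0 * overlap + smooth) / (denom + smooth)


def combined_loss(probs: Sequence[float], targets: Sequence[int], dice_weight: float = 0.5) -> float:
    """Weighted sum of the two losses; the 0.5 default weight is an arbitrary choice."""
    return (1.0 - dice_weight) * bce_loss(probs, targets) + dice_weight * dice_loss(probs, targets)
```

Cross-entropy scores every pixel independently, whereas Dice loss measures overlap with the cloud region as a whole, which makes it less sensitive to the balance between cloud and clear pixels.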

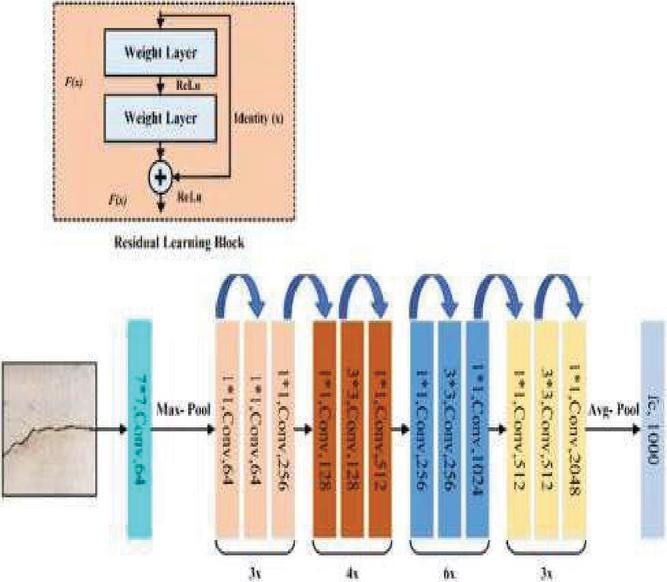

Figure 2 illustrates the detailed layer arrangement within the model.

Figure 2

ResNet layers emphasized in the model.

Model Training and Validation

Important metrics like the Dice Coefficient and Intersection over Union (IoU) are utilized to evaluate the segmentation accuracy of the model. After training is finished, the model is used to interpret fresh satellite images and produce segmentation masks for real-time cloud detection.
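For reference, the standard pixel-level definitions of these metrics can be written in terms of true positives (TP), false positives (FP), and false negatives (FN) for the cloud class. These are the usual textbook forms; the text does not state whether the reported values were pooled over all test pixels or averaged per image.

```latex
\begin{align}
\mathrm{IoU} &= \frac{TP}{TP + FP + FN}, &
\mathrm{Dice} &= \frac{2\,TP}{2\,TP + FP + FN}, \\
\mathrm{Precision} &= \frac{TP}{TP + FP}, &
\mathrm{Recall} &= \frac{TP}{TP + FN}, \\
\mathrm{F1} &= \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}}.
\end{align}
```

When all five are computed from the same pixel counts of a binary mask, the F1-score and the Dice Coefficient are algebraically identical.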

To enable edge deployment, the model is optimized for resource-efficient inference on low-power devices. Finally, the system extracts insights such as cloud coverage and classification, supporting applications in weather forecasting, environmental monitoring, and disaster management.
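Cloud coverage can be derived directly from a predicted mask. The sketch below thresholds per-pixel probabilities and reports the share of cloudy pixels; the 0.5 threshold and the function names are assumptions made for illustration.

```python
from typing import List


def binarize(probabilities: List[List[float]], threshold: float = 0.5) -> List[List[int]]:
    """Turn per-pixel cloud probabilities into a 0/1 mask; 0.5 is an assumed cut-off."""
    return [[1 if p >= threshold else 0 for p in row] for row in probabilities]


def cloud_coverage(mask: List[List[int]]) -> float:
    """Return the percentage of pixels labelled as cloud (value 1) in a binary mask."""
    total = sum(len(row) for row in mask)
    if total == 0:
        raise ValueError("mask is empty")
    cloudy = sum(1 for row in mask for px in row if px == 1)
    return 100.0 * cloudy / total
```

A downstream application could use this percentage to decide whether a scene is clear enough to transmit or analyze further.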

Model Summary Table

Table 1 summarizes the performance of the Cloud Detection model based on the evaluation metrics computed during testing.

Table 1

Model performance summary

| Metric | Value (%) |
|---|---|
| IoU | 91.10 |
| Dice Score | 95.3 |
| Precision | 92.08 |
| Recall | 96 |
| F1 score | 98 |

Edge Deployment Optimization: To ensure real-time performance on edge devices like the NVIDIA Jetson Nano, the model was optimized using several techniques. Transfer learning with a pre-trained ResNet-50 reduced training time. Quantization converted weights to 8-bit integers, minimizing memory usage. Pruning removed redundant parameters to speed up inference. The model was then converted to ONNX and deployed with TensorRT, leveraging GPU acceleration on the Jetson Nano. These steps enabled fast, efficient inference without compromising accuracy.
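The arithmetic behind 8-bit quantization and pruning can be illustrated on a plain list of weights. This sketch shows symmetric per-tensor quantization and magnitude-based pruning. It is not the TensorRT calibration procedure, and the scheme, pruning criterion, and function names are assumptions, since the text does not specify them.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: w is approximated by scale * q, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Map int8 codes back to approximate floating-point weights."""
    return [q * scale for q in quantized]


def prune_by_magnitude(weights: List[float], fraction: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest absolute value."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    n_prune = int(len(weights) * fraction)
    # Indices sorted from smallest to largest magnitude.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]
```

Each stored weight shrinks from 32 bits to 8, at the cost of a rounding error of at most half the scale per weight. Whether that error affects segmentation accuracy has to be checked empirically on the validation set.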

4 Results and Analysis

This section presents the results and outputs generated by the proposed system, including screenshots, performance metrics, and visualizations. A detailed analysis follows, evaluating the model’s effectiveness in cloud detection and segmentation using key metrics. Additionally, the results are discussed to assess the model’s accuracy, robustness, and real-world applicability.

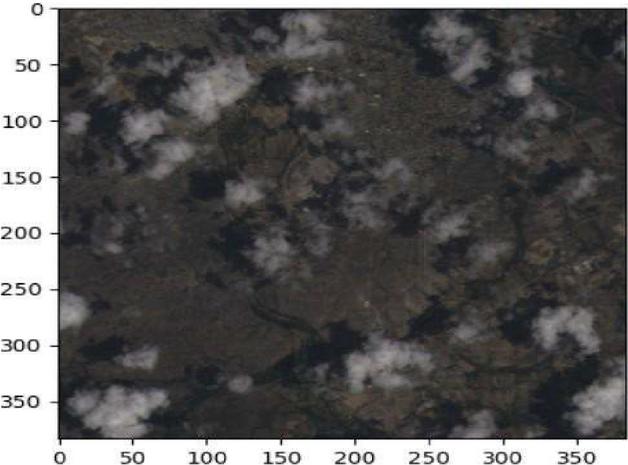

Figure 3 shows a sample raw RGB image from the training dataset.

Figure 3

Sample input satellite image (RGB channels).

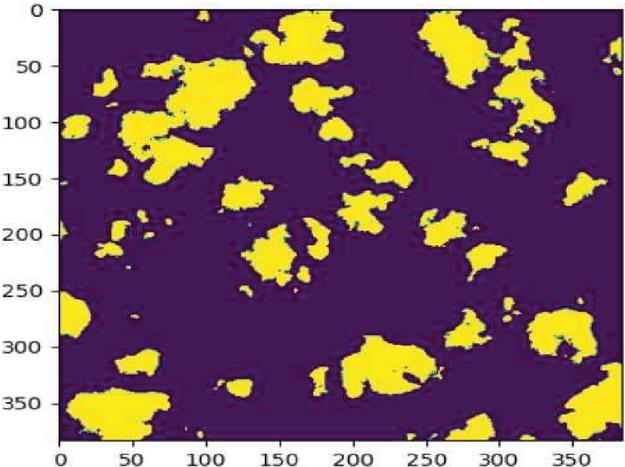

Figure 4 shows the ground truth mask corresponding to the sample raw RGB image from the training dataset.

Figure 4

Corresponding ground truth mask for the input image.

Model Predictions

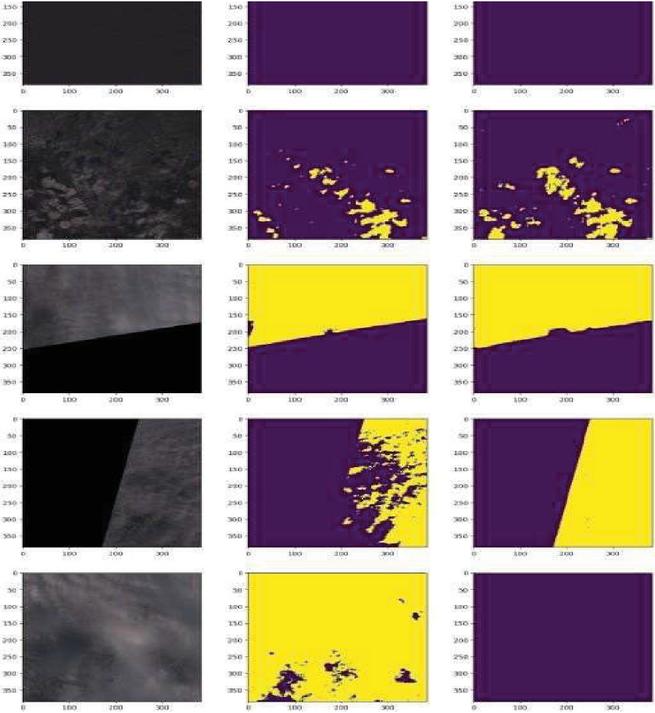

Figure 5 shows the cloud segmentation mask predicted by the model alongside the raw RGB and ground truth images.

Figure 5

Predicted cloud segmentation mask by the model.

Analysis

The analysis section provides an in-depth evaluation of the model’s performance using training metrics, visualizations, and detailed discussions.

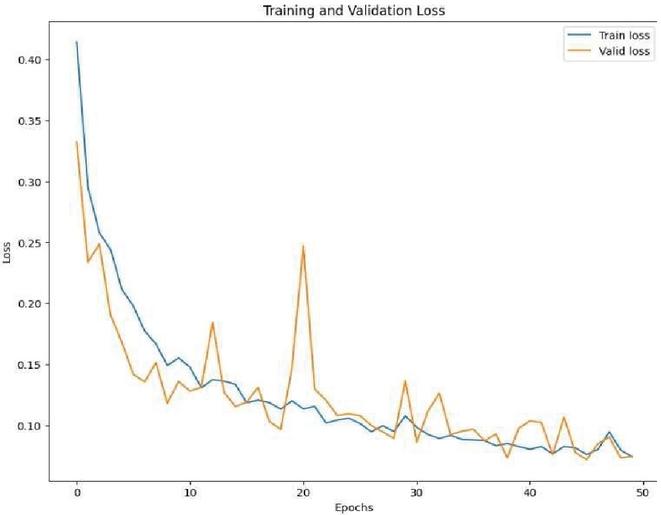

The training and validation loss were monitored throughout the training process to track model convergence and prevent overfitting.

Figure 6

Training and validation loss over 50 epochs.

The graph demonstrates a steady decline in loss values, indicating effective learning. Additionally, the validation loss closely follows the training loss, suggesting minimal overfitting and strong generalization.

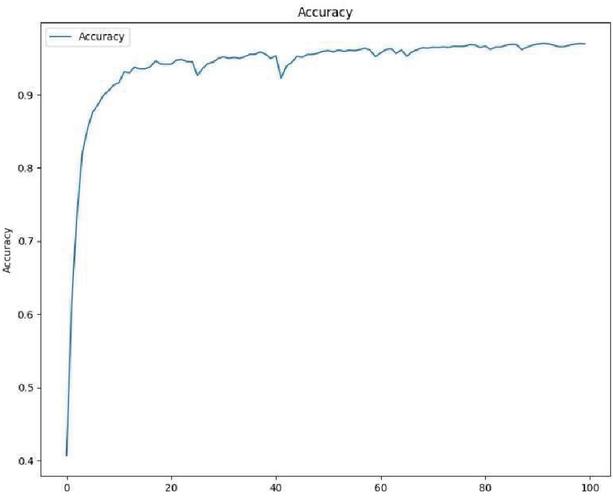

The model’s training and validation accuracy were plotted to assess performance over time.

Figure 7

Accuracy progression over 50 epochs.

The accuracy graph highlights consistent improvements in both training and validation accuracy, showcasing the model’s robustness and ability to generalize well on unseen data.

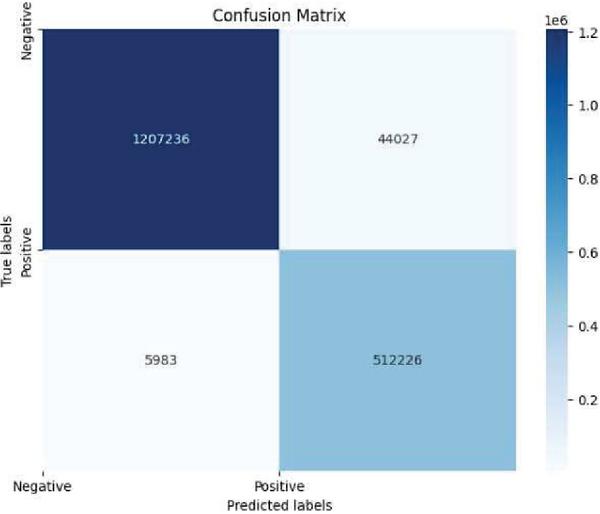

The confusion matrix reveals a high true positive rate, demonstrating the model’s effectiveness in accurately identifying cloud regions.

Misclassifications are minimal, primarily occurring in challenging cases, such as thin or semi-transparent clouds.

The confusion matrix provides a detailed comparison between the model’s predictions and actual ground truth labels.

Figure 8

The confusion matrix plotted using the test dataset.

The model achieved 512,226 true positives and 1,207,236 true negatives, indicating strong accuracy in detecting both cloud and non-cloud regions. False negatives (5,983) were minimal, mainly due to thin or semi-transparent clouds, while false positives (44,027) suggest slight over-segmentation in some clear areas. Overall, the confusion matrix confirms the model’s reliability and effectiveness for real-world satellite image segmentation.
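The counts above are enough to recompute the pixel-level metrics for the cloud class. The sketch below does so with the standard definitions; `segmentation_metrics` is a hypothetical helper. Values obtained this way come from pooled pixel counts and need not match Table 1 exactly if the tabulated figures were averaged per image or rounded differently.

```python
def segmentation_metrics(tp: int, tn: int, fp: int, fn: int) -> dict:
    """Compute pixel-level metrics for the cloud class from confusion-matrix counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / (precision + recall),
        "iou": tp / (tp + fp + fn),
        "dice": 2 * tp / (2 * tp + fp + fn),
    }


# Counts reported for the test set in the paragraph above.
metrics = segmentation_metrics(tp=512_226, tn=1_207_236, fp=44_027, fn=5_983)
for name, value in metrics.items():
    print(f"{name}: {100 * value:.2f}%")
```

Because the F1-score and the Dice Coefficient reduce to the same expression for a binary mask, the two entries printed by this script are identical.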

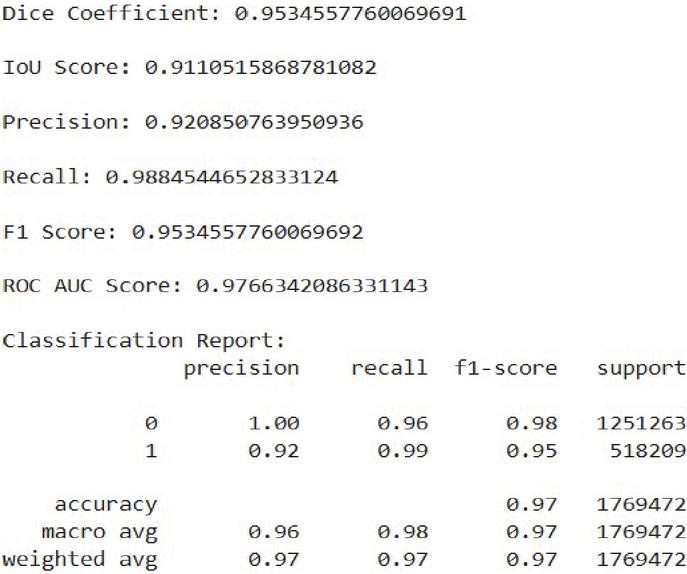

4.1 Performance Metrics

The model’s performance is quantitatively assessed using various evaluation metrics, including accuracy, precision, recall, F1-score, and IoU score.

Figure 9

Summary of the performance metrics.

5 Conclusion and Future Work

This project’s main goal was to use deep learning techniques, specifically the U-Net architecture with ResNet-50 as the backbone, to create a reliable cloud detection and segmentation model for satellite data. The proposed model demonstrated its efficacy in precisely detecting cloud regions, as evidenced by performance indicators such as a high Dice Coefficient, IoU score, and ROC AUC score.

The model’s performance improved significantly through dataset preprocessing such as spectral channel merging and normalization. Evaluation results confirmed its suitability for real-world satellite use by accurately distinguishing cloud from non-cloud areas.

This project enhances remote sensing by providing reliable cloud detection, crucial for quality satellite imagery and applications like land monitoring, disaster response, and climate analysis.

Future work includes optimizing the model further for real-time inference, integrating temporal modeling to track cloud movement, and exploring self-supervised learning approaches to reduce dependence on annotated datasets.

References

[1] He, K., Zhang, X., Ren, S., and Sun, J. (2016). Deep Residual Learning for Image Recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 770–778.

[2] Zhang, Z., Liu, Q., and Wang, Y. (2018). Road Extraction by Deep Residual U-Net. IEEE Geoscience and Remote Sensing Letters, 15(5), 749–753.

[3] Yuan, K., Meng, G., Cheng, D., Bai, J., Xiang, S., and Pan, C. (2017). Efficient Cloud Detection in Remote Sensing Images Using Edge-aware Segmentation Network and Easy-to-hard Training Strategy. 2017 IEEE International Conference on Image Processing (ICIP), Beijing, China, pp. 61–65.

[4] Yang, J., Guo, J., Yue, H., Liu, Z., Hu, H., and Li, K. (2019). CDnet: CNN-Based Cloud Detection for Remote Sensing Imagery. IEEE Transactions on Geoscience and Remote Sensing, 57(8), 6195–6211.

[5] Google Earth Engine Developers. (n.d.). Sentinel-2 Cloud Masking with s2cloudless. Retrieved from https://developers.google.com/earth-engine/tutorials/community/sentinel-2.

[6] Hu, K., Zhang, D., and Xia, M. (2021). CDUNet: Cloud Detection UNet for Remote Sensing Imagery. Remote Sens., 13(22), 4533. https://doi.org/10.3390/rs13224533.

[7] Yan, Z., et al. (2018). Cloud and Cloud Shadow Detection Using Multilevel Feature Fused Segmentation Network. IEEE Geoscience and Remote Sensing Letters, 15(10), 1600–1604. https://doi.org/10.1109/LGRS.2018.2846802.

[8] Gonzales, C., and Sakla, W. (2019). Semantic Segmentation of Clouds in Satellite Imagery Using Deep Pre-trained U-Nets. IEEE Applied Imagery Pattern Recognition Workshop (AIPR), Washington, DC, USA, pp. 1–7. https://doi.org/10.1109/AIPR47015.2019.9174594.

[9] Sorour. (2021). 38Cloud: Cloud Segmentation in Satellite Images. Retrieved from https://www.kaggle.com/datasets/sorour/38cloud-cloud-segmentation-in-satellite-images.

[10] Mahajan, S., and Fataniya, B. (2020). Cloud Detection Methodologies: Variants and Development – A Review. Complex Intell. Syst., 6, 251–261. https://doi.org/10.1007/s40747-019-00128-0.

[11] Li, X., and Zhang, H. (2022). Cloud and cloud shadow detection in Landsat imagery based on deep convolutional neural networks. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 15, 1234–1245.

[12] Zhao, Y., and Li, M. (2022). Automated detection of cloud and cloud shadow in single-date Landsat imagery using neural networks. ISPRS Journal of Photogrammetry and Remote Sensing, 182.

[13] Sawant, M., Shende, M.K., Feijóo-Lorenzo, A.E., and Bokde, N.D. (2021). The State-of-the-Art Progress in Cloud Detection, Identification, and Tracking Approaches: A Systematic Review. Energies, 14, 8119. https://doi.org/10.3390/en14238119.

[14] Zhang, Q., Cui, Z., Niu, X., Geng, S., and Qiao, Y. (2017). Image Segmentation with Pyramid Dilated Convolution Based on ResNet and U-Net. In: Liu, D., Xie, S., Li, Y., Zhao, D., and El-Alfy, E.S. (eds) Neural Information Processing. ICONIP 2017. Lecture Notes in Computer Science, vol. 10635. Springer, Cham. https://doi.org/10.1007/978-3-319-70096-0_38.

[15] Wang, Z., Zhao, L., Meng, J., Han, Y., Li, X., Jiang, R., Chen, J., and Li, H. (2024). Deep Learning-Based Cloud Detection for Optical Remote Sensing Images: A Survey. Remote Sens., 16, 4583. https://doi.org/10.3390/rs16234583.

[16] Ni, Z., Wu, M., Lu, Q., Huo, H., Wu, C., Liu, R., Wang, F., and Xu, X. (2024). A Review of Research on Cloud Detection Methods for Hyperspectral Infrared Radiances. Remote Sens., 16, 4629. https://doi.org/10.3390/rs16244629.

Biographies

Zayeem Ahmed Sheikh is an undergraduate student in Artificial Intelligence and Data Science at Velagapudi Ramakrishna Siddhartha Engineering College, Andhra Pradesh. His interests include satellite image processing, deep learning, and edge AI systems. He is an active member of IEEE GRSS and Google Developer Student Clubs.

Ramesh Kumar Panneerselvam is a Senior Assistant Professor in the Department of Computer Science and Engineering at Velagapudi Ramakrishna Siddhartha Engineering College, Vijayawada, Andhra Pradesh. He holds a Ph.D. in Computer Science and Engineering from Alagappa University. His research interests include Biometrics, Image and Video Processing, Computer Vision, and the Internet of Things. He has received research funding from ADRIN–ISRO and serves as a reviewer for IET journals. He is also in charge of the newly established Quantum Computing Lab at the college, aimed at advancing research and education in emerging computing paradigms. More details about his work can be found on Google Scholar, Scopus, and ResearchGate.

Imran Shaik is an undergraduate student in Artificial Intelligence and Data Science at Velagapudi Ramakrishna Siddhartha Engineering College. His interests include machine learning, deep learning, and remote sensing applications.

Bhanu Tej Polisetti is an undergraduate student in Artificial Intelligence and Data Science. His research interests include machine learning, image segmentation, and embedded AI. He has worked on multiple academic projects involving cloud computing and remote sensing.

Wireless World Research and Trends, Vol. 2, Issue 1, 21–28.

DOI. No. 10.13052/2794-7254.017
